What Do AI Model Numbers Mean? 3B, 7B, 20B…

When size doesn’t tell the whole story

Billions of what, exactly?

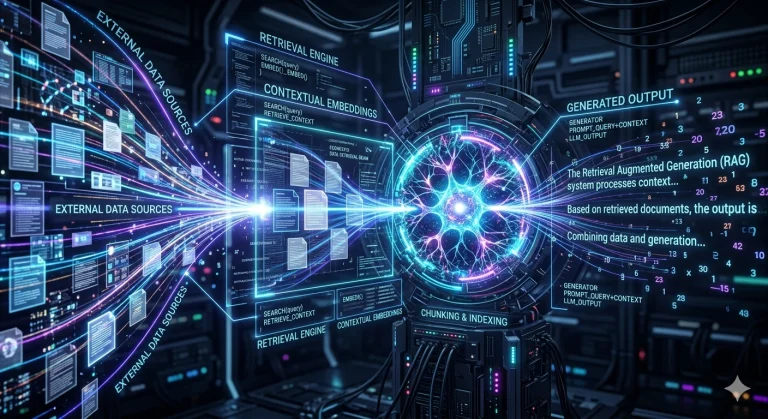

The “B” stands for “billions” and refers to the number of parameters in the model. A parameter is, in simplified terms, a numerical weight on a connection inside the network, adjusted during the model’s training on billions of texts. The more parameters, the more general knowledge the model can potentially “memorise”.

A 3B model has 3 billion parameters. A 70B model has roughly 23 times as many. On paper, the larger one seems better. In practice, it’s far more nuanced.
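To make the idea of “counting parameters” concrete, here is a minimal sketch in Python. It assumes a toy network made only of fully connected layers, which is a simplification (real language models use more elaborate building blocks), and the names `dense_layer_params` and `layer_sizes` are purely illustrative.

```python
def dense_layer_params(n_inputs: int, n_outputs: int) -> int:
    """Parameters in one fully connected layer: one weight per
    input/output connection, plus one bias per output."""
    return n_inputs * n_outputs + n_outputs


# Hypothetical toy network: 512 inputs -> 2048 hidden units -> 512 outputs.
layer_sizes = [512, 2048, 512]

# Sum the parameters of each consecutive pair of layers.
total = sum(
    dense_layer_params(n_in, n_out)
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
)

print(f"Toy network: {total:,} parameters")        # Toy network: 2,099,712 parameters
print(f"70B vs 3B: {70e9 / 3e9:.1f}x as many")     # 70B vs 3B: 23.3x as many
```

Even this tiny network has about two million parameters. A 3B model has over a thousand times more.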

Why a larger model doesn’t guarantee better answers

The quality of a response depends on three main factors. Data quality, meaning the documents, regulations, and professional content the system consults to answer, accounts for around 45% of the result. Prompt quality, i.e. how the question is phrased, contributes around 28%. The model itself accounts for only around 22%.

In other words: a well-configured 7B model, fed with precise professional data and queried with well-formed questions, will regularly outperform a poorly used 70B generalist model.

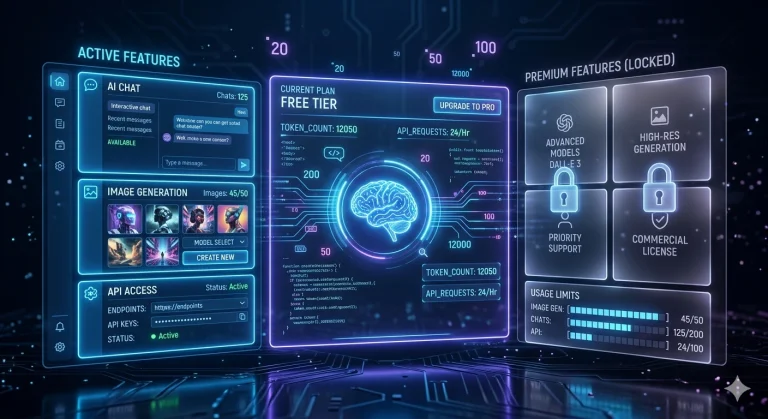

Hardware constraints: a criterion often overlooked

Large models require considerable resources. A 70B model typically needs 40 to 80 GB of RAM, even when its weights are compressed (quantised) to 4 or 8 bits each; at 16-bit precision the weights alone take about 140 GB. That implies powerful servers, a cloud connection, and significant costs. A 7B or 8B model can run on a desktop mini PC, locally, without internet, with entirely satisfactory performance for targeted professional use.
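The arithmetic behind these memory figures can be sketched in a few lines of Python. This is a rough estimate under stated assumptions: it counts only the model’s weights, at a chosen number of bits per parameter, and ignores the extra working memory needed while the model runs. The function name `weight_memory_gb` is illustrative.

```python
def weight_memory_gb(n_params: float, bits_per_param: int) -> float:
    """Approximate memory for the weights alone, in decimal gigabytes.

    Assumption: every parameter is stored with the same number of bits.
    Working memory used during inference is not included.
    """
    bytes_total = n_params * bits_per_param / 8
    return bytes_total / 1e9


for name, n_params in [("7B", 7e9), ("70B", 70e9)]:
    for bits in (16, 8, 4):
        size = weight_memory_gb(n_params, bits)
        print(f"{name} at {bits}-bit: ~{size:.1f} GB")
```

Under these assumptions, a 70B model’s weights come to about 35 GB at 4 bits and 70 GB at 8 bits, while a 7B model’s weights fit in about 3.5 GB at 4 bits, which is why it can run on modest local hardware.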

This is precisely the choice made by ArkeoAI: to prioritise a compact model, optimised for our clients’ professional data, over a massive cloud-dependent model.

Key takeaway

More parameters do not automatically mean more relevance. What matters is the fit between the model, the data, and the use case. A well-designed system with a modest model will often outperform a poorly directed giant model.

Power lies in precision, not in size.