Why Do AI Systems Invent Answers?

Understanding hallucinations in language models

You may have already heard the term “hallucination” applied to artificial intelligence. A model that cites a law article that does not exist. An assistant that calculates a redundancy payment at €107,000 when the real amount is €2,625. A fabricated case law reference, written with the same confidence as a genuine Supreme Court ruling.

This is not a bug developers forgot to fix. It is a direct consequence of how these systems work — and understanding it means knowing how to protect yourself.

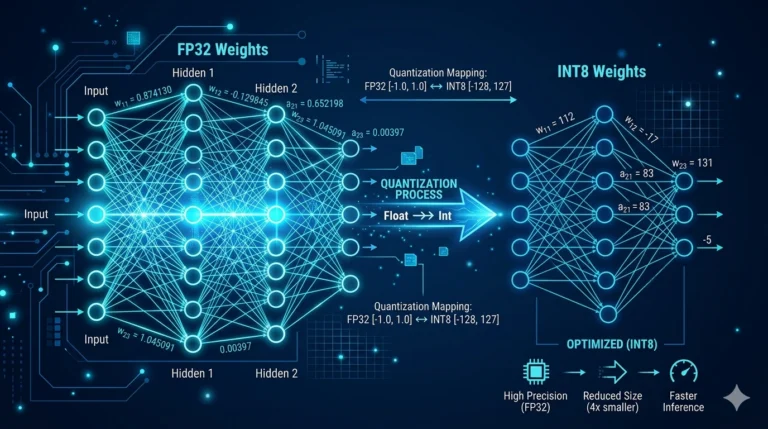

A language model does not “know”. It predicts.

A large language model — whether it is called GPT, Claude, Gemma, or Llama — was not designed to store facts like a database. It was trained on billions of texts to learn one thing: which sequence of words is most likely to follow another sequence of words.

When you ask it a question, it does not search for the answer in a file. It generates a response that resembles what a well-formed human text would say in response to that question. Most of the time, this response is correct — because the texts it was trained on contained many correct answers. But sometimes, it generates something plausible that is not true.

This is precisely what a hallucination is: a fluent, well-constructed, confident response — that is false.

Why it happens: three concrete mechanisms

1. The model fills in the gaps

When information is missing from what the model “sees” at the moment of answering, it does not say “I don’t know”. It continues generating plausible text. That is its deep nature.

Concrete example: during our tests, we asked a model to calculate the statutory redundancy payment for an employee with 5 years’ service and a gross salary of €2,100. The correct formula — one quarter of a month per year of service — was present in the documentary index, but in a separate regulatory article the system had not retrieved. The model, not seeing the formula, invented one: it multiplied the salary by the employee’s job coefficient (185), divided by 173.6, and announced a result of €94,650. The calculation appeared coherent. It was entirely wrong.

2. The model confuses related concepts

Models were trained on texts that frequently mention the same terms together. When a question activates several related concepts, the model may mix them.

Concrete example: when asked about the procedure for dismissal for serious misconduct, a model cited articles L.1235-1 and L.1235-2 — which actually relate to dismissal without real and serious cause, i.e. the penalty for unfair dismissal before employment tribunals. These articles do concern dismissal, compensation, and procedure — but not at all what was asked. The model associated the right keywords with the wrong articles.

3. The model invents sources to justify its claims

This is the most misleading form of hallucination. The model generates not only a false claim, but also a reference that appears to validate it — an article number, a case law ruling, an administrative decision — that simply does not exist.

Concrete example: during a test on the validity of a non-competition clause, a model cited “decision no. 2014-1400 QBL” of the Supreme Court to justify that a financial consideration of 30% would be insufficient. This decision does not exist. The number, the format, the reference — everything was invented, but everything resembled a genuine case law ruling.

Why this is particularly dangerous in regulated professions

In general use — writing an email, summarising an article, brainstorming ideas — a hallucination is often detectable and rarely serious. You re-read, verify, correct.

In law, medicine, accounting, or insurance, it is different. A miscalculated compensation can lead to litigation. An invented law article can support an erroneous decision. A contract clause drafted on a fictional legal basis can be challenged in court.

The professional who uses an AI tool without understanding its limits takes a real risk — not only for their client, but for their own liability.

What RAG changes — and what it doesn’t

A RAG system — like the one used by ArkeoAI — considerably reduces the risk of hallucination by forcing the model to respond from real, indexed, verifiable documents. The model no longer answers from general memory: it answers from what it is shown.

But RAG is not absolute protection. If documents are poorly segmented and related information ends up in separate blocks, the model can still fill in the gaps — and hallucinate.

This is why the quality of a RAG system is measured not only by the model used, but by how the document base has been built, organised, and maintained. An average model with a well-structured document base will give better results than a powerful model with a poorly indexed base.

The right posture towards AI: confidence and vigilance

The goal is not to be afraid of AI, nor to reject it. These tools are genuinely useful — for quickly finding information in a large file, for reformulating a document, for identifying relevant clauses in a contract, for saving time on repetitive tasks.

The right posture is that of a professional using a calculator: they trust the result for routine operations, but they know how to recognise when a figure does not seem reasonable — and they verify.

With AI, it is the same. The answer may be correct. It may also be beautifully written and completely false. The difference between the two is your professional judgement — and a system designed to make that judgement possible.

This is precisely what ArkeoAI gives you: a powerful tool, on your own data, in your own environment, with the transparency needed for you to remain in control of the decision.