What Is a Token?

It is the basic unit an AI uses to read, understand, and write.

You may have noticed that AI services talk about “tokens”: token limits, cost per token, context window in tokens… The word appears everywhere, but it is rarely explained clearly.

Yet understanding what a token is means understanding how a language model perceives text — and why it sometimes behaves in surprising ways. You don’t need to be a computer scientist: a good metaphor is enough.

The starting problem: AI reads neither letters nor words

When you read a sentence, your brain naturally breaks the text into words, groups of words, and ideas. This is an intuitive process, learned from childhood.

A language model cannot do this directly. It only understands numbers — everything must be converted into numbers before entering the model. The question is therefore: how to convert text into numbers as efficiently as possible?

Two naive approaches present themselves:

- Letter by letter — simple, but very inefficient. The word “bonjour” becomes 7 distinct units, with no link between them. The model would need to learn that b-o-n-j-o-u-r form a whole.

- Word by word — more logical, but problematic. There are hundreds of thousands of different words in any language, not counting conjugations, plurals, proper nouns, and foreign words. The dictionary becomes unmanageable.

The solution adopted by modern LLMs is an elegant compromise: tokens. Variable-size text fragments, larger than a single letter, often smaller than a whole word.

A token, concretely

A token is a piece of text that the model has learned to recognise as a unit. This piece can be:

- A common whole word: “house”, “contract”, “client”

- Part of a word: the suffix “-ation” in “termination”, “notification”, “validation”

- A word with its punctuation: “it’s”, “don’t”, “won’t”

- A space followed by a word: ” hello” (with the space in front)

- A single character for rare symbols: “§”, “€”, “²”

In practice, for text in English or French, one token represents on average 3 to 5 characters — approximately 0.75 of a word. Put another way: 100 tokens correspond to roughly 75 words, or a short paragraph.

Concrete example: the sentence “The lease ends on 31 December.” will be split into something like: [The] [lease] [ends] [on] [31] [December] [.] — approximately 7 tokens. But the word “termination” might be split into [termin] [ation] — 2 tokens — because the model did not learn it as a sufficiently frequent whole unit.

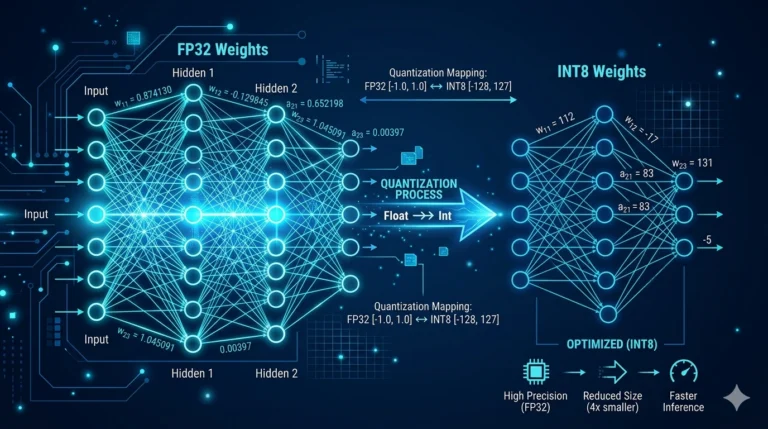

How is this splitting decided?

Training the tokenizer

Before a language model is even trained, its creators build a tokenizer — a splitting tool — by analysing enormous volumes of text. The algorithm looks for the most frequent and useful character sequences, and assigns each one a unique numerical identifier.

The result is a fixed vocabulary of tokens, typically between 30,000 and 100,000 entries. Each token has its number. When you send a sentence to the model, the tokenizer converts it into a list of these numbers — and it is this list of numbers that the model actually processes.

Why some words cost more tokens than others

Words that are very common in the language on which the model was trained tend to be single tokens. Rare, technical, foreign, or very long words are often split into several tokens.

This has practical consequences:

- A legal text dense with technical terms will consume more tokens than a conversational text of the same length.

- Models trained primarily on English tokenise French less efficiently — a French word may require more tokens than its English equivalent.

- Proper nouns, acronyms, and invented words are often split into unusual fragments, which can complicate their processing by the model.

The context window: the model’s immediate memory

Every language model has a limit on the number of tokens it can process at once. This is called the context window.

This window includes everything: your question, the conversation history, the documents you have provided, and the response being generated. Once this limit is reached, the model can no longer “see” what lies beyond.

To give common orders of magnitude:

- A model with a 4,096-token window can process around 3,000 words — a few pages of a document.

- A 32,000-token window corresponds to around 25,000 words — a long report or a series of exchanges.

- The most recent models reach 128,000 tokens or more — the equivalent of an entire novel.

This is why, in systems like ArkeoAI, documents are not sent to the model in bulk. Only the most relevant excerpts — identified by the RAG system — are transmitted, so as not to saturate the context window with irrelevant text.

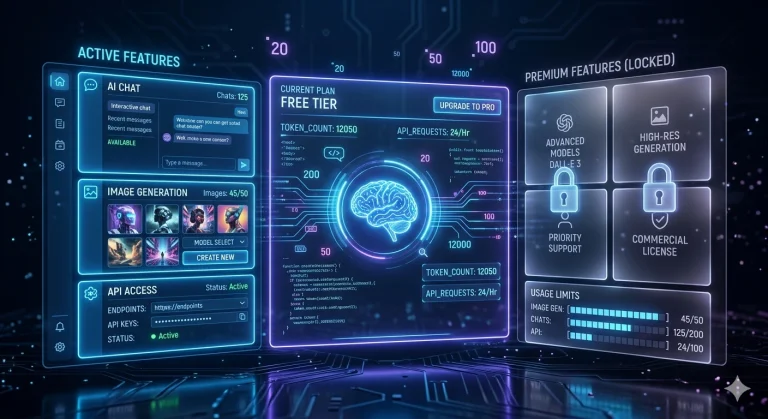

Tokens and billing: why it matters

For online AI services (ChatGPT, Claude via API, etc.), cost is calculated per token. Every input token (your question + documents) and every output token (the generated response) is counted and billed.

In a professional context with significant volumes — dozens of consultations per day on long documents — this counting becomes economically significant. A 50-page document can represent 30,000 to 50,000 tokens in input alone.

This is one of the concrete advantages of a local solution like ArkeoAI: no per-token billing. The model runs on your own hardware, without a meter running with every exchange.

In summary

A token is the fundamental unit of text perception for a language model — neither a letter nor necessarily a whole word, but a text fragment to which a number corresponds in the model’s vocabulary.

Everything you write to the AI is first converted into a sequence of tokens. Everything the AI replies is generated token by token — literally, the model “chooses” the most probable next token at each step, until the end of the response.

Understanding tokens means understanding the real limits of an LLM: it does not think in sentences, it calculates probabilities over sequences of fragments. Which is already remarkable — provided you know how to speak to it.