What is a context window?

The secret of AI’s “short-term memory”

The first time someone hears that an artificial intelligence model has a “context window,” they usually nod and move on. Then, a few weeks later, when the AI system starts behaving strangely – forgetting something mentioned five minutes ago, or confusing the beginning of a document with the end – the question suddenly becomes very relevant: what exactly is this, and why does it matter?

Simply put: the context window is the amount of text an AI can “see” and process at one time. No more, no less. It is the model’s active working memory – the space that holds everything it can take into account at any given moment.

The librarian who can only see one desk’s worth of material

Imagine the following scene. You walk into a library and are greeted by an exceptionally knowledgeable, experienced librarian. This person has accumulated vast knowledge over decades: they know the laws, the literature, the precedents. When you ask a question, they answer immediately and precisely.

There is, however, one special rule: they can only consider one desk’s worth of material at a time. The desk fits, say, twenty-five pages of text. If the information needed for your question fits on that desk – the document, the previous questions, the background material – they work perfectly. But if you try to place a three-hundred-page contract on the desk, either the beginning falls off, or the end does, and the librarian can only see what remains.

That desk is the context window.

Tokens and sizes: the world of numbers

The size of a context window is generally measured in tokens. A token is not the same as a word: in English text it is roughly four characters, so an average word comes to about one and a third tokens. A thousand tokens is approximately 750 words – about the length of a dense newspaper article.

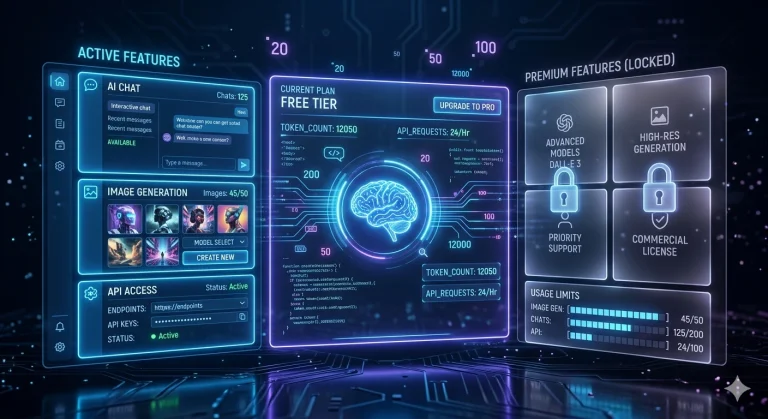

Different models work with different context window sizes:

• Smaller, local models: 4,000–8,000 tokens (approx. 3,000–6,000 words)

• Mid-range models: 32,000–128,000 tokens

• Larger cloud models: over 200,000 tokens

At first, the numbers seem abstract. To make it tangible: a standard legal contract typically runs between five and fifteen thousand words. A full year’s accounting file – including year-end summaries, invoices, and correspondence – can easily exceed one hundred thousand words.
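To connect these word counts to the window sizes listed above, here is a minimal sketch of the arithmetic. It assumes the rule of thumb of about 0.75 English words per token (the same ratio as 4,000 tokens to roughly 3,000 words); real tokenizers vary by language and by model, and the function names are purely illustrative.

```python
import math

# Assumption: roughly 0.75 English words per token (a rule of thumb, not an exact figure).
WORDS_PER_TOKEN = 0.75


def estimate_tokens(word_count: int) -> int:
    """Estimate how many tokens a text of the given word count occupies."""
    return math.ceil(word_count / WORDS_PER_TOKEN)


def fits_in_window(word_count: int, window_tokens: int) -> bool:
    """Check whether a text of the given length fits into a context window."""
    return estimate_tokens(word_count) <= window_tokens


long_contract_words = 15_000      # upper end of a standard legal contract
accounting_file_words = 100_000   # a full year's accounting file

print(estimate_tokens(long_contract_words))             # 20000
print(fits_in_window(long_contract_words, 8_000))       # False: too large for a small local model
print(fits_in_window(long_contract_words, 32_000))      # True
print(fits_in_window(accounting_file_words, 128_000))   # False
```

Under this estimate, a long contract already exceeds the window of a small local model, and a full accounting file exceeds even the upper end of the mid-range window.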

What happens when something “falls out” of the window?

This is the point where many users first encounter a seemingly inexplicable phenomenon. The AI system suddenly doesn’t “remember” something mentioned not long ago. Or it contradicts itself. Or it analyses the end of a document while the beginning no longer fits in its active memory.

It is important to understand: this is not an error and not carelessness. It is a built-in architectural limitation of the model. What it cannot see on the desk, it simply cannot take into account – not because it doesn’t want to, but because that information is no longer present for it.

Different systems handle this situation differently. Some drop the beginning of the window (the oldest parts of the conversation), some compress the middle. In every case, the result is that something is lost.
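The first strategy, dropping the oldest material, can be sketched in a few lines. This is only an illustration under simple assumptions: tokens are approximated as four characters each, and `trim_to_window` is a hypothetical helper, not the behaviour of any specific product.

```python
def count_tokens(text: str) -> int:
    """Crude token estimate: about four characters per token (assumption)."""
    return max(1, len(text) // 4)


def trim_to_window(messages: list[str], window_tokens: int) -> list[str]:
    """Keep the most recent messages that fit; older ones fall off the desk."""
    kept: list[str] = []
    used = 0
    # Walk backwards from the newest message and stop once the budget is spent.
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > window_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


conversation = ["a" * 40, "b" * 40, "c" * 40]  # three messages, about 10 tokens each
visible = trim_to_window(conversation, window_tokens=25)
print(len(visible))  # 2: the oldest message no longer fits
```

Whatever is not in the returned list is simply invisible to the model, which is why an early detail can appear to be forgotten.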

Why does the size of the window matter?

The size of the context window directly determines what types of tasks a model is suited for.

Advantage of a large window: long documents can be analysed at once; complex, multi-step tasks can be completed without the AI “forgetting” what was said at the beginning.

Disadvantage of a large window: processing is slower, requires more computational capacity, and for locally-run offline models this represents a serious hardware burden.

Advantage of a small window: faster, more efficient, lower hardware requirements, ideal for local deployment.

Disadvantage of a small window: with longer documents, context may be lost; the model only sees a fragment of the whole.

In reality, however, most daily professional tasks do not require a gigantic context window. This is the point where it is worth thinking realistically.

In the case of ArkeoAI: why is this not a bottleneck?

The ArkeoAI system was not designed for lengthy document analysis. Its primary use case is not uploading a two-hundred-page case file and expecting the model to read, interpret, and summarise it in a single step.

The strength of ArkeoAI lies in precise, targeted answers.

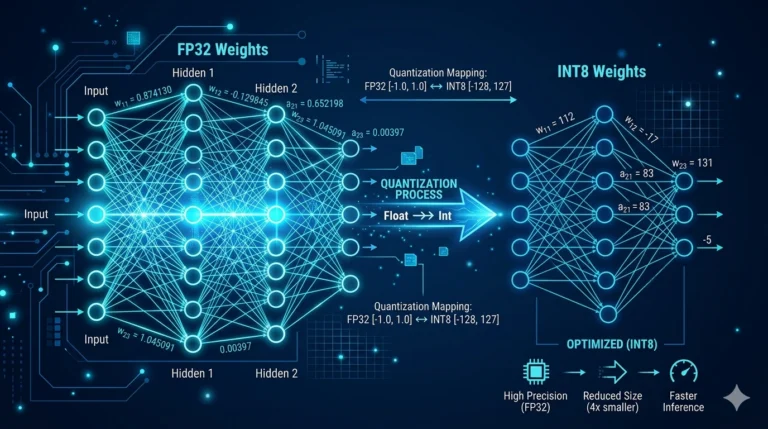

The system works as follows: your office’s own documents – templates, past cases, internal policies, client data – are pre-processed and stored in a structured way. When you ask a question, the model does not “read through” the entire database; it retrieves only the relevant excerpts and responds based on those. This is the essence of RAG (Retrieval-Augmented Generation) technology.

The consequence is that what enters the context window is not a three-hundred-page document, but the question and the few genuinely relevant paragraphs. This fits comfortably. The model sees everything it needs to see – no more, no less.
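The retrieval idea can be illustrated with a toy sketch. It is not ArkeoAI’s actual implementation: production RAG systems typically rank excerpts using vector embeddings, whereas this example assumes a simple word-overlap score, and the functions `retrieve` and `build_prompt` are hypothetical.

```python
import re


def words(text: str) -> set[str]:
    """Lowercase a text and split it into a set of distinct words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, excerpts: list[str], top_k: int = 2) -> list[str]:
    """Return up to top_k excerpts sharing the most words with the question."""
    question_words = words(question)
    scored = [(len(question_words & words(e)), e) for e in excerpts]
    scored = [pair for pair in scored if pair[0] > 0]  # drop unrelated excerpts
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [excerpt for _, excerpt in scored[:top_k]]


def build_prompt(question: str, excerpts: list[str], top_k: int = 2) -> str:
    """Assemble what enters the context window: relevant excerpts plus the question."""
    context = "\n".join(f"- {e}" for e in retrieve(question, excerpts, top_k))
    return f"Context:\n{context}\n\nQuestion: {question}"


office_documents = [
    "The notice period for termination is thirty days.",
    "Invoices are archived for eight years.",
    "Office hours are nine to five.",
]
print(build_prompt("What is the notice period for termination?", office_documents, top_k=1))
```

Only the single matching excerpt and the question reach the model; the rest of the stored material never occupies the window.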

ArkeoAI therefore works reliably not because it has a vast context window, but because it intelligently filters what enters the window. That is the difference between raw computational power and intelligent design.

What does this mean in practice?

A lawyer looking for an answer about a specific contractual clause probably does not want a two-hour analysis. An accountant asking for confirmation on the accounting classification of a particular invoice does not expect a scientific treatise. A medical assistant searching a patient’s file for a specific piece of data wants exactly that one piece of data.

ArkeoAI is precisely suited to these tasks. The answer is accurate, fast, and built on your office’s own data – not on third-party data stored in the cloud, and without sending sensitive information over the internet.

The size of the context window is a technical parameter. In the case of ArkeoAI, this parameter is handled appropriately – and you do not need to worry about it.