What Is an MoE Model?

The Secret Architecture Behind the AI Tools You Already Use

If you have heard that large AI models are made up of experts but had no idea what that actually means, this article is for you. It is written for accountants, lawyers, doctors, and anyone else who uses these tools without being an engineer.

The Kitchen Analogy: Where It All Starts

Imagine walking into a restaurant with a single chef. This chef knows a little about everything: grilling, baking, fish, pastry decoration. When you order a schnitzel, they mentally run through every technique they know before they even pick up a pan. Versatile, yes. Fast, not really.

Now picture a second restaurant: eight chefs, each a specialist in a different area. The moment you order the schnitzel, the system immediately knows to call on the meat specialist. The others keep resting or serve another table. Faster, more efficient, and the result is better.

This is roughly how an MoE model works. MoE stands for Mixture of Experts: instead of a single all-knowing neural network handling every question, multiple smaller, specialized sub-networks are available, and only the most relevant ones are activated at any given moment.

An Old Idea That AI Rediscovered

The MoE concept is not a product of the recent AI boom. The core principle was described as far back as 1991 by Robert Jacobs, Michael Jordan, Steven Nowlan, and Geoffrey Hinton in an academic paper titled Adaptive Mixtures of Local Experts. Its central argument was straightforward: different tasks are better handled by different learned units, with a gating network deciding which one to call.

For decades the idea stayed largely theoretical. Early computers and networks were not capable of handling such architectures efficiently. Then, in the late 2010s, as large language models began their explosive rise, the concept resurfaced, this time with real momentum.

The turning point came in 2017, when a Google Brain research team published a paper titled Outrageously Large Neural Networks: The Sparsely-Gated Mixture-of-Experts Layer. It introduced the modern sparsely-gated MoE layer, built on a simple but powerful insight: you can build enormous models without activating every single parameter for every single calculation.

How It Actually Works: The Gatekeeper

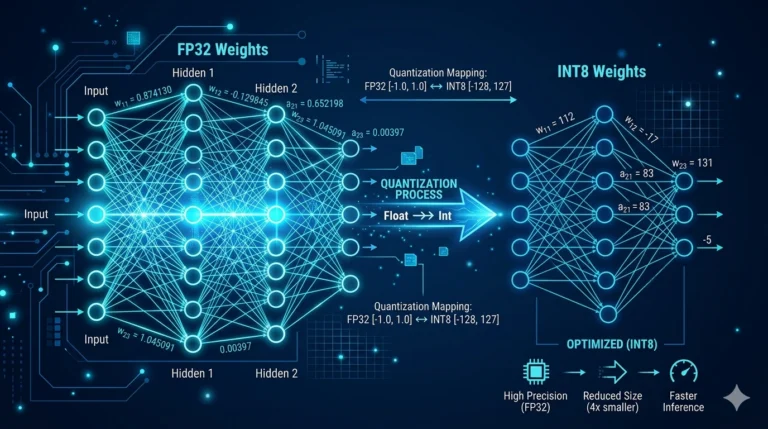

In a conventional (dense) language model, every parameter, that is, every learned weight, takes part in processing every token, where a token is a word or a fragment of a word. A model with 70 billion parameters therefore performs on the order of 140 billion arithmetic operations, roughly two per parameter, just to generate a single token. That is extraordinarily resource-intensive, both in energy and time.

MoE models introduce an intelligent routing system. For each token, a small learned network, the gatekeeper, scores the available experts and decides: which of them are needed here? Typically only a handful activate at once, often one or two and rarely more than eight, from a pool that might include 8, 64, or even hundreds. The rest sit idle for that token. The model can therefore have enormous total capacity, say 400 billion parameters, while using only around 50 billion for any given token.
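The routing step described above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the implementation of any particular model: the function names `route` and `moe_layer`, the eight stand-in experts, and the hand-written gate scores are all invented for the example. In a real model the scores come from a learned network and each expert is itself a neural network.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route(gate_scores, k=2):
    """Pick the k highest-scoring experts and normalize their weights."""
    ranked = sorted(range(len(gate_scores)),
                    key=lambda i: gate_scores[i], reverse=True)
    top = ranked[:k]
    weights = softmax([gate_scores[i] for i in top])
    return list(zip(top, weights))

def moe_layer(x, gate_scores, experts, k=2):
    """Weighted sum of the outputs of the selected experts only."""
    return sum(w * experts[i](x) for i, w in route(gate_scores, k))

# Eight toy "experts" (hypothetical): each multiplies its input by 1..8.
experts = [lambda x, f=f: f * x for f in range(1, 9)]

# Hypothetical gatekeeper scores for one token, one score per expert.
gate_scores = [0.1, 2.0, -1.0, 0.3, 1.0, 0.0, -0.5, 0.2]

chosen = route(gate_scores, k=2)
output = moe_layer(10.0, gate_scores, experts, k=2)

print("experts used:", [i for i, _ in chosen])
print("their weights:", [round(w, 3) for _, w in chosen])
print("layer output:", round(output, 2))
```

Only two of the eight experts are ever called for this token; the other six do no work at all, which is where the savings come from.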

Back to the kitchen: the restaurant with eight chefs has a higher total payroll than the one with a single cook. But serving any one table only costs the salary of the one specialist who handles that dish. Guests get their food faster. The electricity bill is lower.

GPT-4 and the Great Guessing Game

When GPT-4 launched in March 2023, OpenAI revealed very little about its internal architecture: no parameter count, no training data details, no structural description. That silence was unusual for an announcement of this magnitude, and speculation started almost immediately.

Over the following months, leaks and unconfirmed reports led much of the AI community to a widely shared belief: GPT-4 is built on an MoE architecture. The most cited hypothesis holds that the model consists of eight experts, each with roughly 220 billion parameters, with two activating at a time, giving a total of around 1.76 trillion parameters but only approximately 440 billion in active use at any moment.
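The arithmetic behind these rumored figures is easy to check. The sketch below plugs in the unconfirmed numbers quoted above and makes one simplifying assumption: that every parameter lives inside an expert. Real MoE models also contain components shared by all experts, so actual totals would differ. The function name `moe_parameter_counts` is made up for this example.

```python
def moe_parameter_counts(num_experts, params_per_expert, active_experts):
    """Return (total, active) parameter counts for a simplified MoE model.

    Assumption: all parameters sit inside the experts; shared layers
    are ignored to keep the arithmetic transparent.
    """
    total = num_experts * params_per_expert
    active = active_experts * params_per_expert
    return total, active

# Rumored, never officially confirmed figures for GPT-4.
total, active = moe_parameter_counts(
    num_experts=8,
    params_per_expert=220 * 10**9,
    active_experts=2,
)

print(f"total:  {total / 10**12:.2f} trillion")
print(f"active: {active // 10**9} billion ({active / total:.0%} of total)")
```

Under these assumptions, three quarters of the model sits unused for any single step of the calculation.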

The reasoning behind this hypothesis was partly technical inference. GPT-4’s combination of performance quality, response diversity, and speed pointed simultaneously to massive capacity and efficient activation, which is precisely the fingerprint of an MoE system. Fueling the speculation was a June 2023 podcast interview in which the engineer George Hotz claimed that GPT-4 was not a single massive model but a mixture of eight smaller ones.

It is worth being clear: OpenAI has never officially confirmed this architecture. The hypothesis remains the inference of the technical community. What is certain is that from that point on, MoE was no longer an obscure research concept, and competitors began openly embracing it.

Who Openly Admits to Using MoE?

French AI company Mistral AI released its Mixtral 8x7B model in December 2023 and explicitly described its MoE structure: eight experts, two active for each token. The results were striking. Mistral reported that it matched or outperformed Llama 2 70B, a much larger conventional model, on most of the benchmarks it tested.

Google DeepMind confirmed the use of MoE architecture in Gemini 1.5 Pro. Meta’s openly available Llama family has also moved in this direction with Llama 4. MoE is no longer experimental. It is now a foundational component of many systems at the frontier of AI development.

What Does This Mean for Accountants, Lawyers, and Doctors?

In plain terms: more value from the same, or less, computation. When a firm deploys a local AI solution for medical documentation, contract analysis, or accounting data extraction, a model built on MoE architecture is not just smarter in the abstract. It can be genuinely more economical to run: less computation and energy per answer, and faster responses. The one thing it does not reduce is the amount of memory the hardware needs, a point covered under the drawbacks.

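For law firms, the idea of specialization is particularly appealing, but it needs a caveat. It is tempting to picture one expert handling tax law texts, another civil litigation documents, and another EU regulatory material. In today's general-purpose models, however, nobody assigns the experts their subjects. The division of labor is learned automatically during training and tends to follow low-level patterns in the text rather than neat professional categories. Mistral's own analysis of Mixtral, for example, found no obvious specialization by topic. What the user sees is unchanged: a single unified system that draws on different parts of itself for different inputs. As a mental model for the routing itself, though, the comparison with professional specialization still helps.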

Think of it as a large law firm. There is no single all-knowing attorney. Instead there are practice groups: tax, real estate, employment, corporate. A client walks in, and the receptionist, the gatekeeper, immediately knows which desk to send the file to. The client’s experience: one firm, exactly the right expertise.

The Drawbacks: What Not to Overlook

As with any technology, there are real limitations. Training MoE models is significantly more complex than training conventional dense networks. The gatekeeper itself has to learn, and if it learns poorly, the entire system can go wrong: some experts become overloaded while others are barely used.

Load balancing is a genuinely difficult challenge. If the same two or three experts receive nearly every token, the benefits of having many experts disappear. Researchers typically add a penalty term to the training objective that grows when routing becomes lopsided, which pushes the gatekeeper to distribute work more evenly across the specialist pool.
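One common way to measure imbalance can be sketched as follows. The penalty below follows the general form of the auxiliary loss described in the Switch Transformer paper (number of experts times the sum, over experts, of the share of tokens sent to each expert multiplied by the average routing probability it received), without that paper's scaling coefficient. The function names and the toy data are assumptions made for illustration.

```python
from collections import Counter

def load_fractions(assignments, num_experts):
    """Share of tokens that ended up at each expert."""
    counts = Counter(assignments)
    return [counts.get(i, 0) / len(assignments) for i in range(num_experts)]

def balance_penalty(assignments, router_probs, num_experts):
    """Equals 1.0 when routing is perfectly even; grows as it becomes lopsided."""
    f = load_fractions(assignments, num_experts)
    # Average probability the gatekeeper gave each expert across all tokens.
    p = [sum(row[i] for row in router_probs) / len(router_probs)
         for i in range(num_experts)]
    return num_experts * sum(fi * pi for fi, pi in zip(f, p))

NUM_EXPERTS = 4

# Toy case 1: eight tokens spread evenly over four experts.
even_assignments = [0, 1, 2, 3, 0, 1, 2, 3]
even_probs = [[0.25, 0.25, 0.25, 0.25]] * 8
balanced = balance_penalty(even_assignments, even_probs, NUM_EXPERTS)

# Toy case 2: every token goes to expert 0.
skewed_assignments = [0] * 8
skewed_probs = [[0.7, 0.1, 0.1, 0.1]] * 8
skewed = balance_penalty(skewed_assignments, skewed_probs, NUM_EXPERTS)

print("even routing penalty:  ", round(balanced, 2))
print("skewed routing penalty:", round(skewed, 2))
```

During training, a term like this is added to the model's main objective, so the gatekeeper is nudged away from sending everything to its favorite experts.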

There is also a memory cost. Although fewer parameters are active at any moment, all of them must remain loaded in memory so that whichever expert is needed can respond instantly. This creates infrastructure requirements that sit on the operator side rather than the user side, invisible to the end user but very visible to the system administrator.
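A rough estimate makes the memory cost concrete. The sketch below reuses the illustrative 400-billion-parameter model from earlier in this article and assumes each parameter is stored as a 16-bit number, that is, 2 bytes. It counts the weights only and ignores everything else a running model keeps in memory. The function name `weights_memory_gb` is made up for this example.

```python
def weights_memory_gb(num_params, bytes_per_param=2):
    """Memory needed just to hold the weights, in gigabytes (10**9 bytes).

    Assumption: 2 bytes per parameter (16-bit storage). Working memory
    used while the model runs is not included.
    """
    return num_params * bytes_per_param / 10**9

TOTAL_PARAMS = 400 * 10**9   # illustrative total from the article
ACTIVE_PARAMS = 50 * 10**9   # illustrative active share per token

resident = weights_memory_gb(TOTAL_PARAMS)   # must stay loaded at all times
touched = weights_memory_gb(ACTIVE_PARAMS)   # actually used for one token

print(f"weights that must stay in memory: {resident:.0f} GB")
print(f"weights used for a single token:  {touched:.0f} GB")
```

The computing bill follows the smaller number, while the hardware bill follows the larger one.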

Why This Concept Is Worth Knowing

MoE is not an abstract engineering concept that only matters in research labs. A growing number of the AI assistants, document analysis tools, and automated systems that accountants, lawyers, and doctors use daily are already built on this principle, or soon will be.

Understanding the basic idea helps you make better judgements: about what to ask of these systems, when it is worth choosing between different models, and why a generalist tool sometimes underperforms compared to a purpose-built alternative on a specific professional task.

The AI landscape is changing fast. The spread of MoE architectures means that the next generation of AI systems will be simultaneously larger and more efficient. The question is not whether this is coming. It already has.