RAM vs CPU: Why Memory Trumps Processing Power?

What really happens when your AI thinks

When talking about computer performance, the usual reflex is to look at the processor: “how many GHz? how many cores?” This is logical for many tasks — video editing, gaming, running a complex spreadsheet.

But for running a language model like those used by ArkeoAI, this logic is completely reversed. It is not the processor speed that determines whether things work — it is the amount of available RAM. And the difference is not trivial: a model may simply refuse to start if RAM is insufficient, regardless of CPU speed.

Here is why.

An AI model is, above all, a very heavy object

A language model such as Mistral, LLaMA, or Gemma is not a conventional program. It is a file of mathematical parameters — billions of numbers called weights — that encode everything the model “knows”. These weights are the result of training on astronomical quantities of text.

The size of these files is directly proportional to the model’s capability. A small 7-billion-parameter model weighs around 4 to 8 GB. A more capable 13-billion-parameter model easily exceeds 10 to 16 GB. And large 70-billion-parameter models can reach 40 to 60 GB.

This raw weight must be held somewhere during execution. And that place is RAM.

Why everything must fit in RAM

The processor stores nothing — it computes

The role of the processor (CPU) is to perform calculations: additions, multiplications, comparisons. It is very fast, but it retains nothing. To perform a calculation, it must fetch data from memory, process it, and return the result.

Think of a chef in a kitchen. The processor is the chef. They can peel, chop, and mix very quickly. But they cannot hold 40 kilos of ingredients in their hands at once. They need a large enough worktop to lay out everything they need within reach.

That worktop is the RAM.

The hard drive problem: speed

You might wonder: if the model doesn’t fit in RAM, why not use the hard drive? Technically, this is possible — it is what is called swap or disk offloading. In practice, it is almost unusable.

A conventional hard drive (HDD) is around 100,000 times slower than a RAM stick for accessing data. Even a fast NVMe SSD is still 10 to 50 times slower than RAM. To generate a single word of response, the model must consult its parameters tens of thousands of times. If each consultation requires fetching data from disk, the delay becomes unbearable.

Concrete result: a model partially on disk can take several minutes to generate a single sentence. It is no longer an assistant — it is a waiting exercise.

What concretely happens during an inference

When you ask the AI a question, here is what technically happens, in simplified terms:

- Your question is tokenised — broken into small text units called tokens.

- These tokens are transformed into numerical vectors.

- These vectors pass through the layers of the model — dozens, sometimes hundreds, of matrix computation layers. Each layer uses a portion of the model’s weights.

- At the output of each layer, the result is used as input for the next.

- At the end of the process, the model produces one response token. Then starts again for the next token, and so on until the end of the response.

At each step, the processor must access the weights corresponding to the current layer. If these weights are not in RAM, they must be loaded from disk — and everything stalls. The entire model must therefore be loaded into RAM before the first response even begins to appear.

The CPU is not useless — but it is not the bottleneck

This does not mean the processor is irrelevant. It does perform the mathematical operations of each layer — and a faster or more powerful CPU produces tokens more quickly, once the model is loaded.

But here is the real priority hierarchy for running an LLM on a standard computer:

- Priority 1 — Sufficient RAM: without this, the model does not start or becomes unusable.

- Priority 2 — Memory bandwidth: the speed at which the CPU can read RAM matters as much as the processor frequency itself.

- Priority 3 — CPU speed: increases token throughput per second once the model is correctly loaded.

This is also why GPUs (graphics cards) are so effective for AI models: they have built-in memory (VRAM) with exceptionally high bandwidth, capable of serving model weights to the graphics processor at a speed that a standard CPU with conventional RAM cannot match.

How much RAM do you actually need?

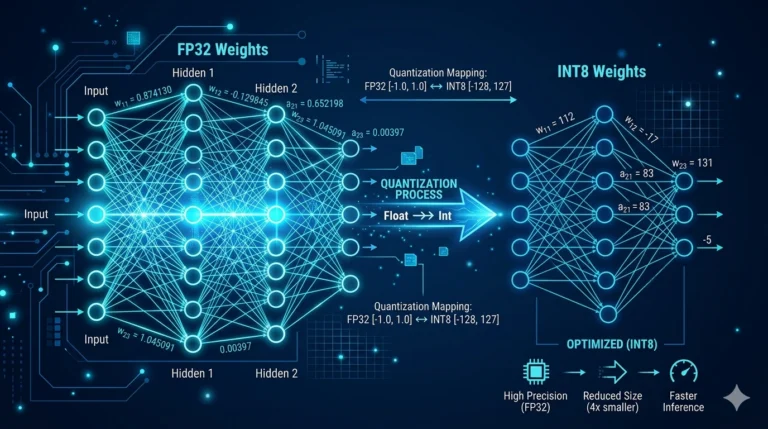

A practical rule for estimating RAM requirements: count approximately 1 GB of RAM per billion parameters, using quantised (compressed) models in 4-bit format. With less aggressive compression (8-bit), double this figure.

- 7B model quantised 4-bit: around 4 to 5 GB of RAM — runs on a standard desktop computer with 8 GB of RAM.

- 13B model quantised 4-bit: around 8 to 10 GB — requires 16 GB of RAM to run comfortably.

- 34B model quantised 4-bit: around 20 GB — requires at least 32 GB of RAM.

- 70B model quantised 4-bit: around 40 GB — requires 64 GB or more.

This is why the mini PCs used by ArkeoAI are systematically configured with 32 to 64 GB of RAM — not out of excess caution, but out of functional necessity. This is what makes it possible to run models capable enough for serious professional use, without depending on the internet, without sending your data outside.

In summary

A language model is, above all, a heavy object to carry in memory. Before computing anything, the entirety of its parameters must be instantly accessible — and only RAM offers the speed necessary for this.

A fast processor with too little RAM is like a competition chef in a kitchen with no worktop: they may have the most agile hands in the world, but they cannot work. Conversely, a machine with plenty of RAM and a modest CPU will produce slower responses — but it will produce responses.

RAM is the necessary condition. CPU speed is the optimisation. In that order, not the reverse.