Why Cloud AI Is Risky When Confidential Data Is Involved

What you send to the cloud no longer truly belongs to you

When a lawyer, notary, or accountant uses an online AI tool, something happens that many people are unaware of: the data entered — questions, document excerpts, client names — passes through external servers, often located outside the European Union.

What the GDPR says

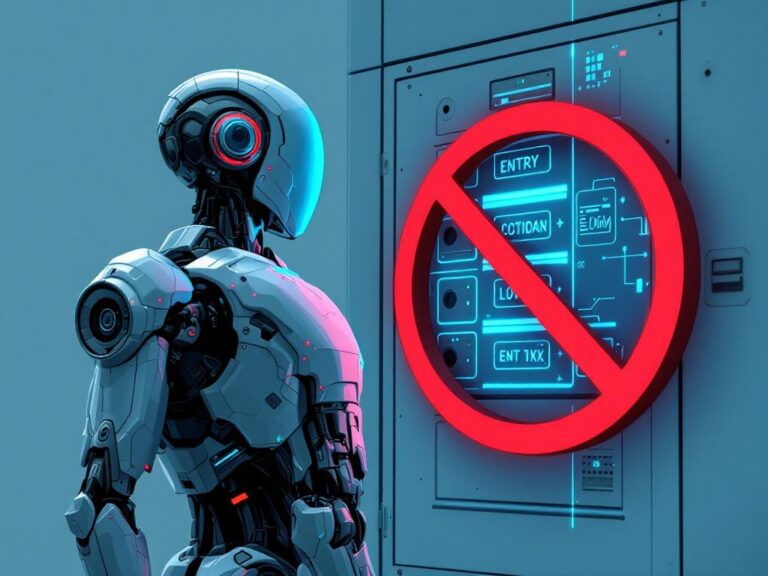

The General Data Protection Regulation (GDPR) imposes strict obligations on professionals who process personal data. Using an American cloud service to analyse a contract or prepare a client file may constitute a transfer of personal data outside the EU. Without an adequacy decision or appropriate safeguards such as standard contractual clauses, such a transfer is unlawful.

In 2024, the French data protection authority (CNIL) recorded a 20% increase in security incidents reported by SMEs. Professional firms are among the most exposed targets, precisely because they hold sensitive and confidential data.

Professional secrecy at stake

For a lawyer, professional secrecy is not optional: it is an obligation of professional ethics. Submitting elements of a client file to an online tool, even inadvertently, may constitute a breach of that secrecy. The same risks apply to notaries, doctors, and accountants.

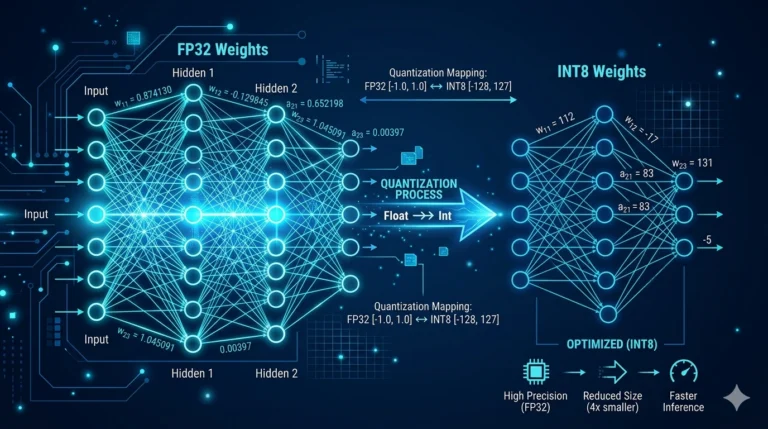

The alternative: keeping data in-house

A local AI solution — installed directly on a workstation or mini server within the firm — transmits no data externally. The questions asked, the documents analysed, the answers generated: everything stays within the firm’s walls.

This is not a matter of distrust towards technology. It is a matter of professional responsibility.

Your client data deserves to stay where it has always been: with you.